OpenAI is launching Sora, a generative text-to-video AI model. According to OpenAI, this new AI model will serve “as a foundation for models that can understand and simulate the real world, a capability we believe will be an important milestone for achieving AGI.”

As a diffusion model, Sora generates video by starting from what looks like static noise and gradually removing that noise over many steps. This allows users to create detailed, photorealistic videos up to one minute long from written text prompts. According to the company, Sora can create “complex scenes with multiple characters, specific types of motion, and accurate details of the subject and background,” as well as “accurately interpret props and generate compelling characters that express vibrant emotions.”
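To make the idea of "starting from noise" more concrete, here is a minimal toy sketch in Python. It is not OpenAI's method and says nothing about how Sora is actually implemented. The function `toy_denoise`, its parameters, and the five-value `target` are all hypothetical. A real diffusion model uses a trained neural network to estimate the noise to remove at each step; this sketch assumes an "oracle" that already knows the final result, purely to show the step-by-step refinement.

```python
import random


def toy_denoise(target, steps=50, seed=0):
    """Start from random noise and move toward `target` a little each step.

    ASSUMPTION: a real diffusion model relies on a trained network to
    predict what noise to remove. Here a hypothetical "oracle" that
    already knows the target stands in for that network.
    """
    rng = random.Random(seed)
    # Begin with random values that look like static.
    frame = [rng.gauss(0.0, 1.0) for _ in target]
    for step in range(steps):
        # Fraction of the remaining gap to close; reaches 1.0 on the last step.
        rate = 1.0 / (steps - step)
        frame = [x + rate * (t - x) for x, t in zip(frame, target)]
    return frame


# Stand-in for a handful of pixel intensities in one frame.
target = [0.0, 0.25, 0.5, 0.75, 1.0]
result = toy_denoise(target)
max_error = max(abs(r - t) for r, t in zip(result, target))
print(f"largest remaining error: {max_error:.6f}")
```

Each pass removes part of the remaining "noise," so the values drift from random static toward a coherent result. In an actual text-to-video model, the text prompt would guide what that result looks like.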

Sora can generate entire videos from scratch or extend existing videos. By giving the model foresight of many frames at a time, OpenAI has tackled the challenge of keeping a subject consistent even when it momentarily disappears from view, which makes for smoother transitions throughout the generated video. Sora uses a transformer architecture similar to that of OpenAI's GPT models. According to OpenAI, this architecture scales well and enables Sora to handle a wide range of video and image data.

Video Credit: OpenAI

Building on research from the earlier DALL·E and GPT models, Sora incorporates the recaptioning technique from DALL·E 3. This technique involves generating highly descriptive captions for the visual training data, which helps Sora follow users' text instructions more faithfully.

Access to Sora is currently restricted to “red teamers” tasked with assessing potential risks associated with the new model. OpenAI has also extended limited access to a handful of artists, designers, and filmmakers for feedback. The company has noted that the current model may not accurately simulate the physics of complex scenes and might struggle to interpret certain cause-and-effect scenarios, which is why it wants to make improvements before a wider release.

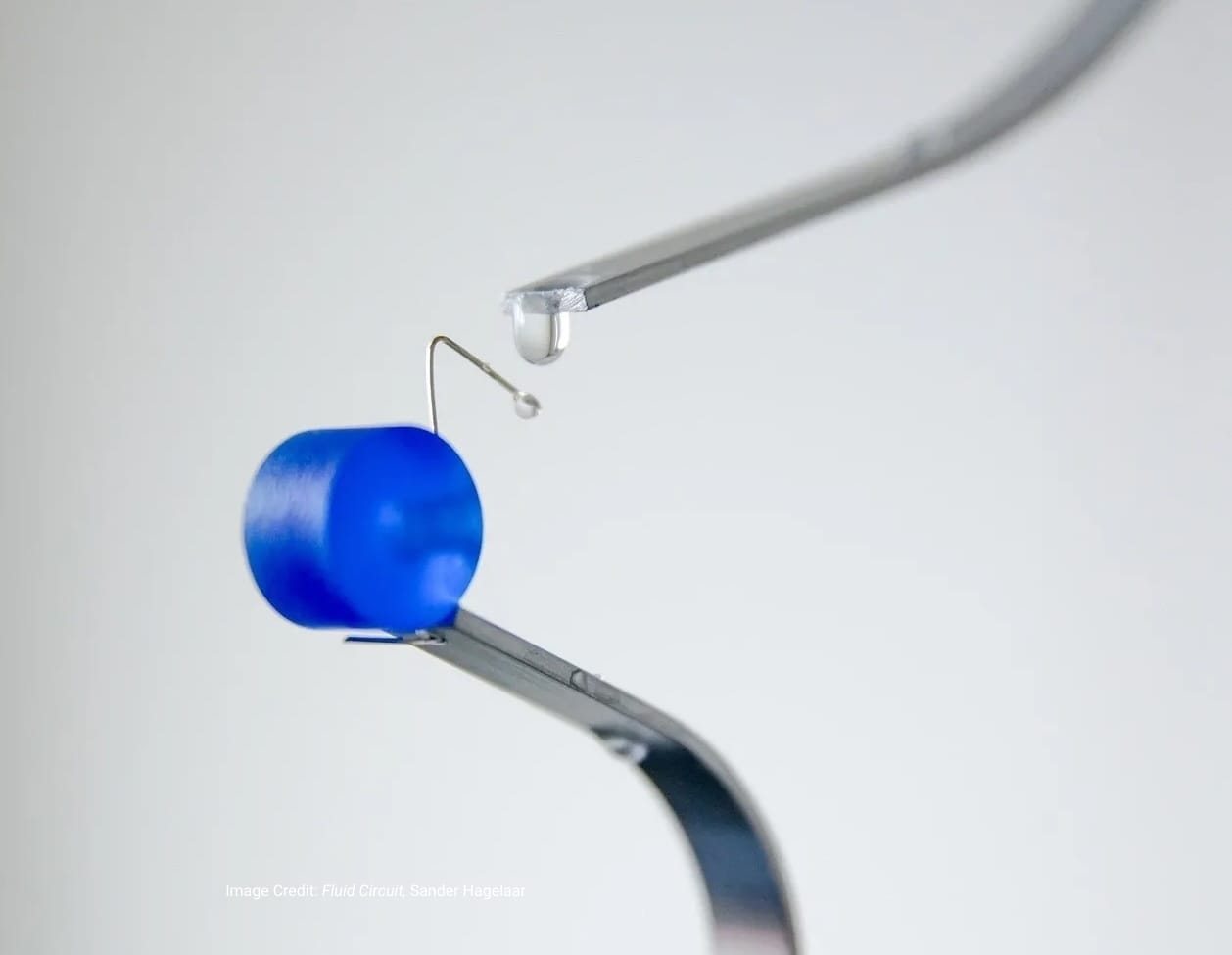

The level of detail in the Sora-generated demo videos recently released by OpenAI is impressive. But perhaps the most exciting feature of Sora is its ability to turn still images into video. Sora can animate the contents of an image with accuracy and attention to small detail. It can also extend existing videos or fill in missing frames, which could make it a valuable tool for content creators and filmmakers alike.

OpenAI is not the first company to venture into text-to-video generative AI. A number of companies, including Runway and Pika, have shared their own text-to-video models. Because both can generate videos from static images, Google's Lumiere and OpenAI's Sora are poised to become main rivals in this area.

As text-to-video AI models emerge and evolve, they carry significant implications for the creative industry and its practitioners. These range from questions of authorship and rights, which fuel the ongoing debate over copyright and AI, to the potential for new storytelling formats. Embracing these advancements while navigating the associated challenges will be essential to shaping the future of creativity and technology.