President Joe Biden recently signed an executive order as an initial step toward safety guidelines for generative AI. This move signals the administration's commitment to regulating AI technology, albeit in the absence of comprehensive legislation.

The executive order outlines the following key objectives: creating standards for AI safety and security, protecting privacy, advancing equity and civil rights, safeguarding consumers, patients, and students, supporting the workforce, fostering innovation and competition, maintaining US leadership in AI, and promoting responsible government use of the technology.

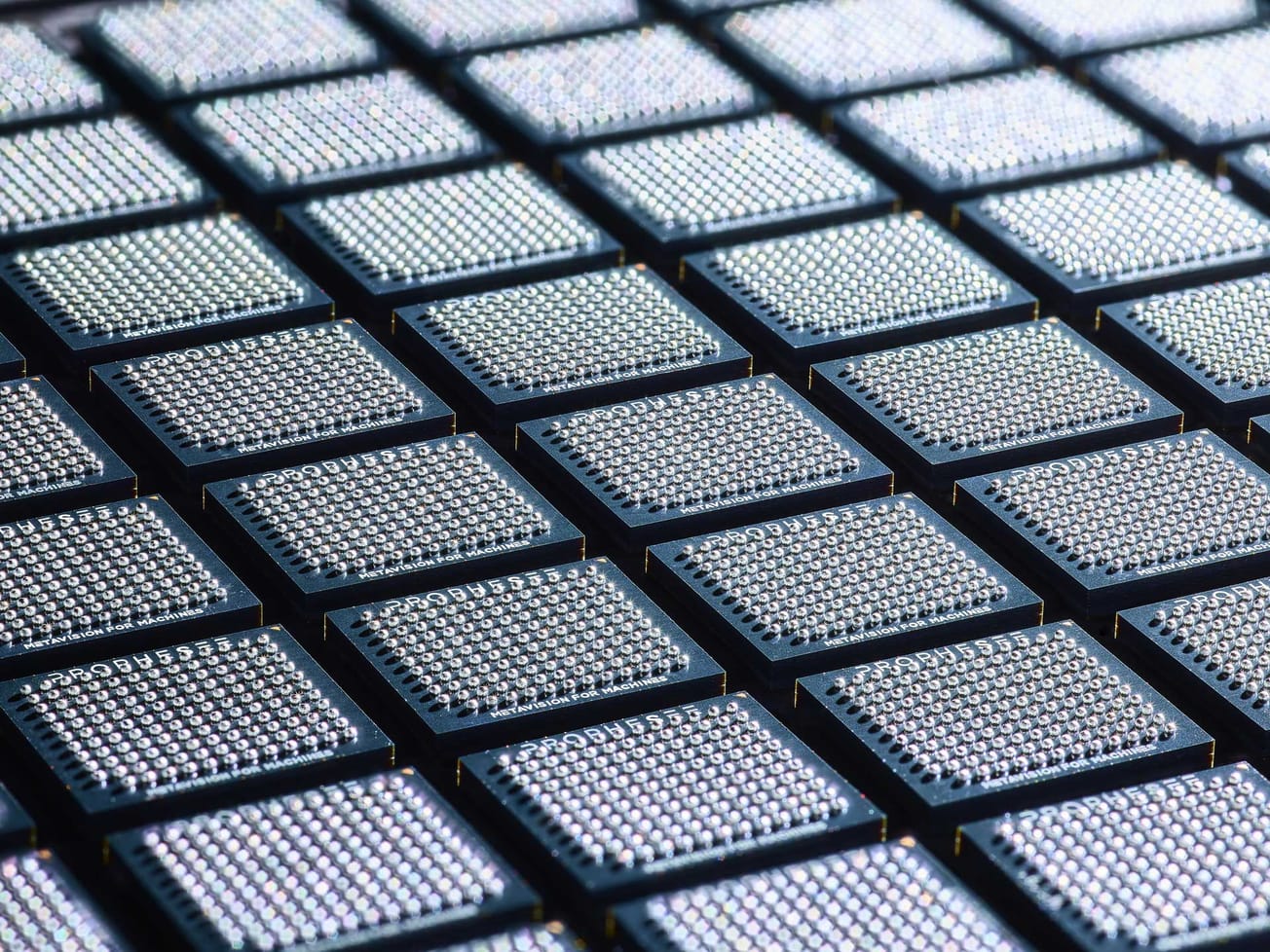

To achieve these objectives, the order assigns specific responsibilities to government agencies. The National Institute of Standards and Technology (NIST) has been charged with developing standards for assessing AI models before they are made public. The Department of Energy and the Department of Homeland Security will address potential AI threats to infrastructure, along with related nuclear and cybersecurity risks.

A senior Biden administration official clarified in a briefing with reporters that these guidelines are primarily intended for the development of future models. Even so, OpenAI's GPT and Meta's Llama 2 will be among the existing models affected.

The executive order also emphasizes the importance of transparency and safety in AI development. It makes clear that there is no intention of recalling publicly available AI models, and that existing anti-discrimination rules will continue to apply to them. Additionally, the order calls on Congress to pass data privacy regulations and encourages the development of privacy-preserving techniques and technologies. It aims to prevent AI from being used for discriminatory purposes in contexts such as sentencing, parole, and surveillance.

The order also addresses concerns about job displacement by directing agencies to assess AI's impact on the labor market. It promotes AI growth by launching a National AI Research Resource, which will provide essential information to students and AI researchers, and by offering support for small businesses. The executive order follows the previously released AI Bill of Rights, a set of principles that AI model developers should adhere to. These principles have been translated into agreements with leading AI companies, including Meta, Google, OpenAI, Nvidia, and Adobe.

Navrina Singh, founder of Credo AI and a member of the National Artificial Intelligence Advisory Committee, applauded the move, stating, “It’s the right move for right now because we can’t expect policies to be perfect at the onset while legislation is still discussed. I do believe that this really shows AI is a top priority for government.”

An executive order is not permanent law and is typically limited to the current administration, but this one may prove to be a significant step toward establishing standards for generative AI. The conversation about comprehensive AI regulation is ongoing, with some lawmakers aiming to pass AI-related laws by the end of the year.