The rapid spread of open-source generative AI models is beginning to reshape how creative work is produced. Instead of relying exclusively on proprietary platforms, a growing number of artists, designers, and researchers are assembling their own machine-learning workflows using openly distributed models and software frameworks. The shift has less to do with the novelty of AI-generated images than with a deeper transformation in the technical infrastructure of creative practice.

Much of this change traces back to the release of Stable Diffusion in 2022 by Stability AI and collaborators. Unlike many earlier generative models, Stable Diffusion was released with publicly accessible weights that allowed developers and researchers to run the model locally and modify its architecture. Within months, an extensive ecosystem of tools and interfaces had emerged around it, turning diffusion-based image generation into a flexible platform for experimentation. Today that ecosystem includes a wide range of software environments that allow users to construct modular AI pipelines, modify training datasets, and fine-tune generative models. What began as a technical release has contributed to a broader shift in how machine learning is integrated into creative workflows.

The Stable Diffusion Ecosystem

Stable Diffusion belongs to a class of generative systems known as diffusion models. These models learn to reconstruct images from noise through an iterative denoising process, gradually producing coherent visual structures. Because the model weights and code were released publicly, developers rapidly began adapting the system to different creative and technical uses.

One important piece of infrastructure supporting this experimentation is Hugging Face, a widely used platform that hosts thousands of machine-learning models and datasets. Through its model hub, researchers and developers can share modified versions of diffusion models, training checkpoints, and experimental architectures. Artists and independent studios frequently download these models directly and run them on local hardware, allowing them to experiment outside commercial AI services.

Alongside the models themselves, a growing set of interfaces has appeared that enable users to construct complex generative pipelines. Software environments such as ComfyUI, Automatic1111’s Stable Diffusion WebUI, and InvokeAI allow creators to build node-based workflows in which image generation can be combined with segmentation models, image conditioning systems, or post-processing stages. In these environments, the generative model becomes one component within a larger computational system. The result is a technical ecosystem in which machine learning operates less like a standalone application and more like programmable creative infrastructure.

Building Modular Generative Pipelines

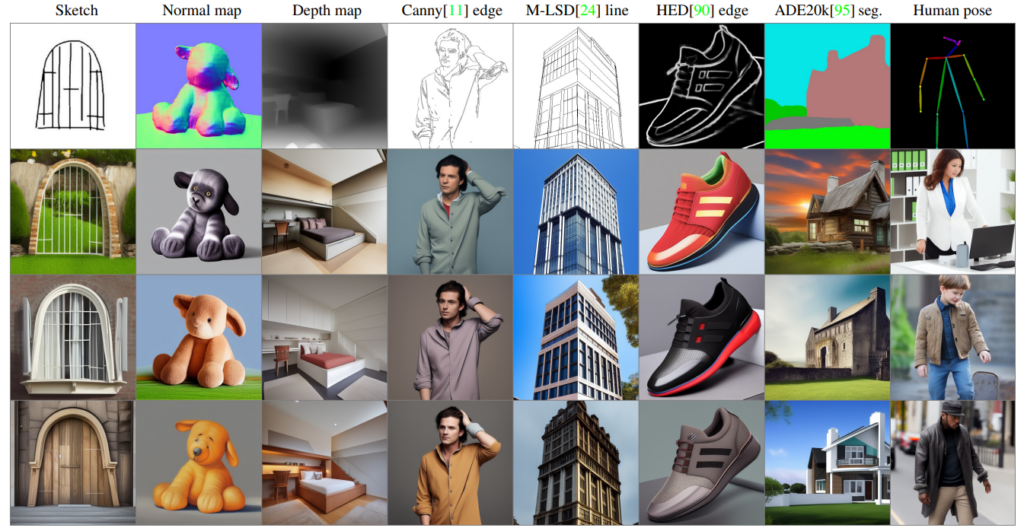

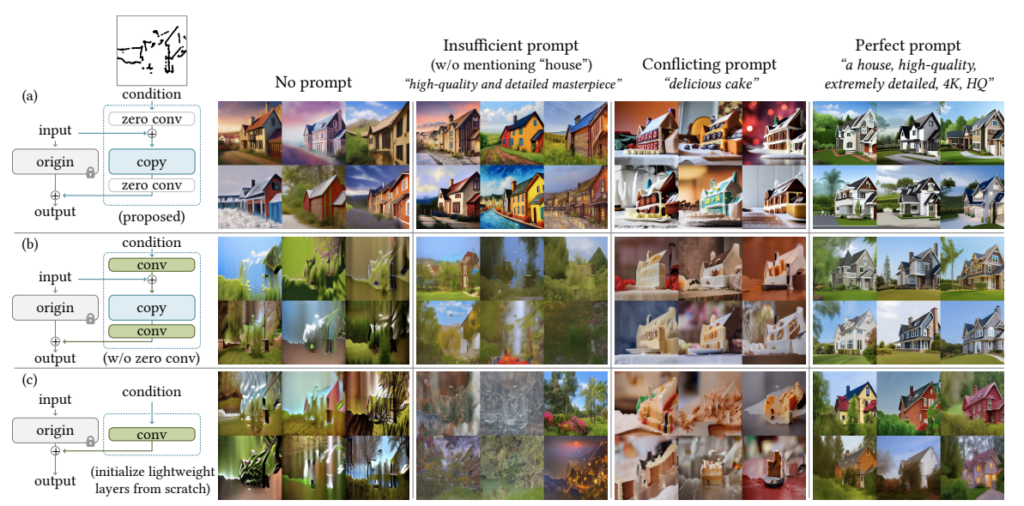

Node-based AI interfaces have become particularly influential because they allow users to construct visual workflows that resemble production pipelines used in animation or visual effects. Instead of issuing a single prompt to generate an image, users can chain together multiple processes: generating an image, modifying it through image-to-image diffusion, guiding the structure with pose estimation, or altering color and composition through additional model passes. One widely adopted extension of diffusion models is ControlNet, introduced in 2023 by Lvmin Zhang and collaborators. ControlNet allows diffusion systems to follow structural guidance derived from inputs such as edge maps, depth maps, or human pose estimation. This capability gives artists much finer control over the layout and composition of generated imagery.

In practical terms, a generative workflow might involve generating an initial image with a diffusion model, applying ControlNet to enforce compositional constraints, and then refining the output through multiple iterations of image-to-image transformation. Each stage of the process can be modified or replaced, turning the workflow itself into a configurable system. Because these pipelines are modular, they can be adapted to a wide range of creative contexts. Some practitioners use them to produce speculative architectural imagery, while others experiment with animation, motion graphics, or generative illustration. In each case the emphasis shifts away from isolated outputs and toward the design of computational processes.

Running AI Models Inside the Studio

Another significant development is the increasing number of creative studios running generative models locally. Advances in consumer graphics processing units (GPUs) have made it feasible for independent practitioners to run diffusion models on workstation hardware rather than relying entirely on cloud-based services. Running models locally offers several advantages. It allows users to modify models, test experimental architectures, and fine-tune systems using custom datasets. It also makes it possible to construct workflows that combine multiple machine-learning models in ways that would be difficult to implement within a closed platform.

Repositories hosted on Hugging Face distribute models in formats that can be downloaded and integrated into these local pipelines. Developers frequently publish checkpoints for fine-tuned models, allowing others to build upon previous work. The resulting ecosystem resembles open-source software communities, where tools evolve through iterative experimentation rather than centralized development. This distributed infrastructure has accelerated how quickly generative tools are adapted to specific creative needs. Rather than waiting for features to appear in commercial software, practitioners can modify the underlying systems themselves.

From Tools to Infrastructure

The rapid spread of open diffusion models suggests that generative AI may develop less like a single application and more like a layered technological infrastructure. At one level are the base models that generate images. Above them sit the interfaces that allow users to construct pipelines. Surrounding both are repositories, datasets, and software libraries that enable experimentation.

As these layers evolve, the role of creative practitioners is expanding. An increasing number of practitioners now work not only with images or visual compositions, but also with training datasets, generative pipelines, and machine-learning architectures. In this context, the studio begins to resemble a small research environment where models are tested, modified, and integrated into broader production systems.

The emergence of open generative AI ecosystems has not eliminated proprietary tools or platforms. Commercial AI services continue to dominate mainstream use. Yet the rapid development of open models has created an alternative pathway—one in which machine learning can be adapted, modified, and embedded directly into creative workflows. For those building with these systems, the most significant shift may not be the images produced by AI, but the growing ability to shape the tools that produce them.