Generative AI is typically experienced through screens: chat windows, image feeds, dashboards. Increasingly, though, machine learning systems are being embedded into physical objects—arcade cabinets, kinetic installations, responsive environments—where computation unfolds through motors, water, light, and mechanical movement rather than pixels.

This shift does not reject software. It relocates it. The interface is no longer only a touchscreen but a surface, a sensor array, a hinge, a grid of valves. The output is not just a rendered image but a physical change in space. The move from screen to object becomes visible in the work of Ross Goodwin, Random International, and Universal Everything.

Ross Goodwin: Machine Learning as Physical Apparatus

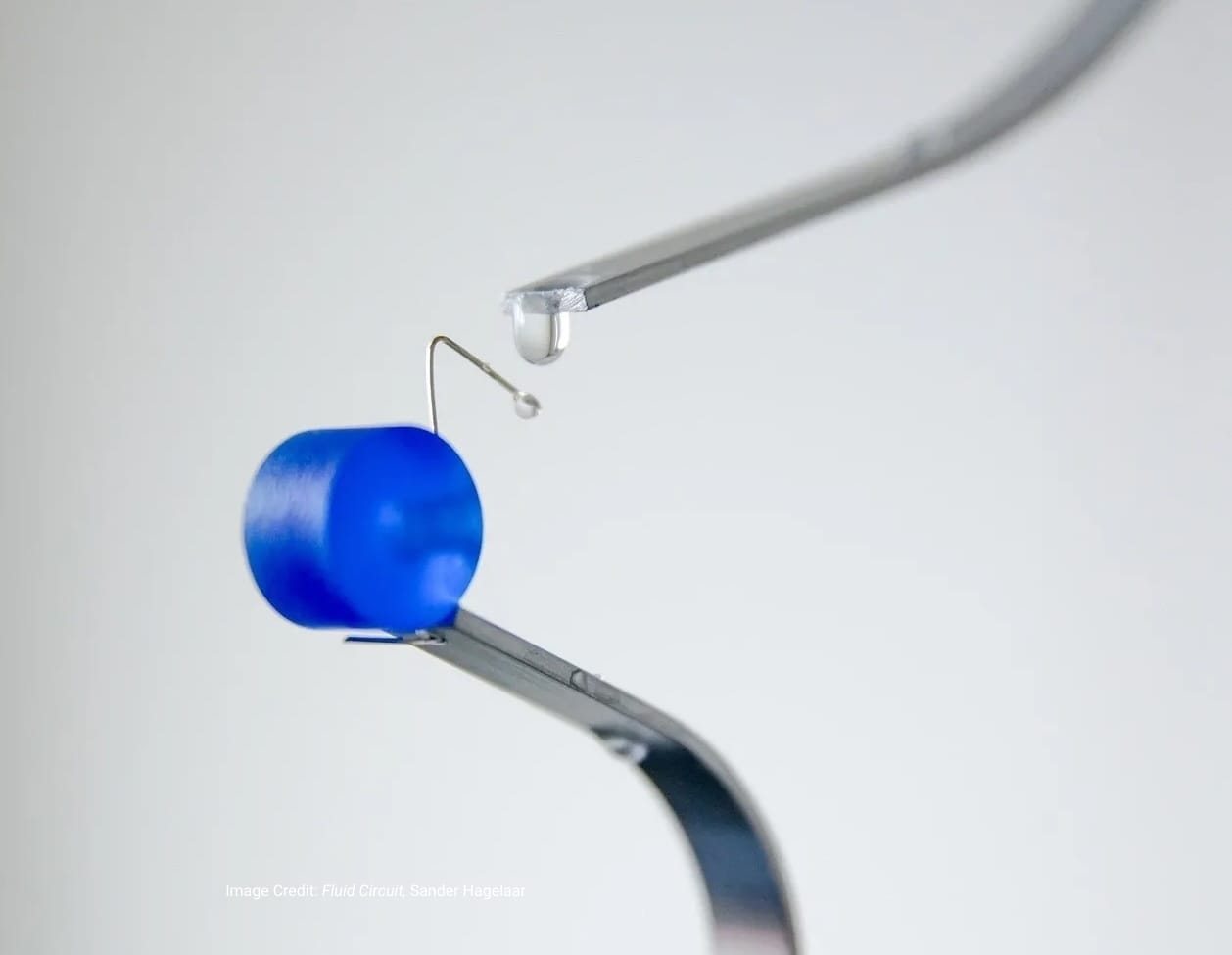

Ross Goodwin is known for experiments in computational writing. In 2017, he created 1 the Road, a book generated during a cross-country car trip using sensors connected to a recurrent neural network. Cameras, GPS, microphones, and a clock supplied real-time data to the system, which produced text continuously and printed it on receipt paper as the journey unfolded.

The setup occupied a passenger seat. It required cameras, wiring, computing hardware, and a thermal printer. Text was generated and printed live during the trip, foregrounding the mechanics of machine inference rather than hiding them behind an interface. The published book was compiled from that output.

Goodwin has also exhibited installations in which AI-generated text is printed in real time, making the act of computation materially visible. Paper feeds. Ink accumulates. Lines appear sequentially. Instead of interacting with a seamless interface, viewers encounter a machine performing inference in physical space.

Here, AI is not a background service. It is equipment.

Random International: Responsive Environments

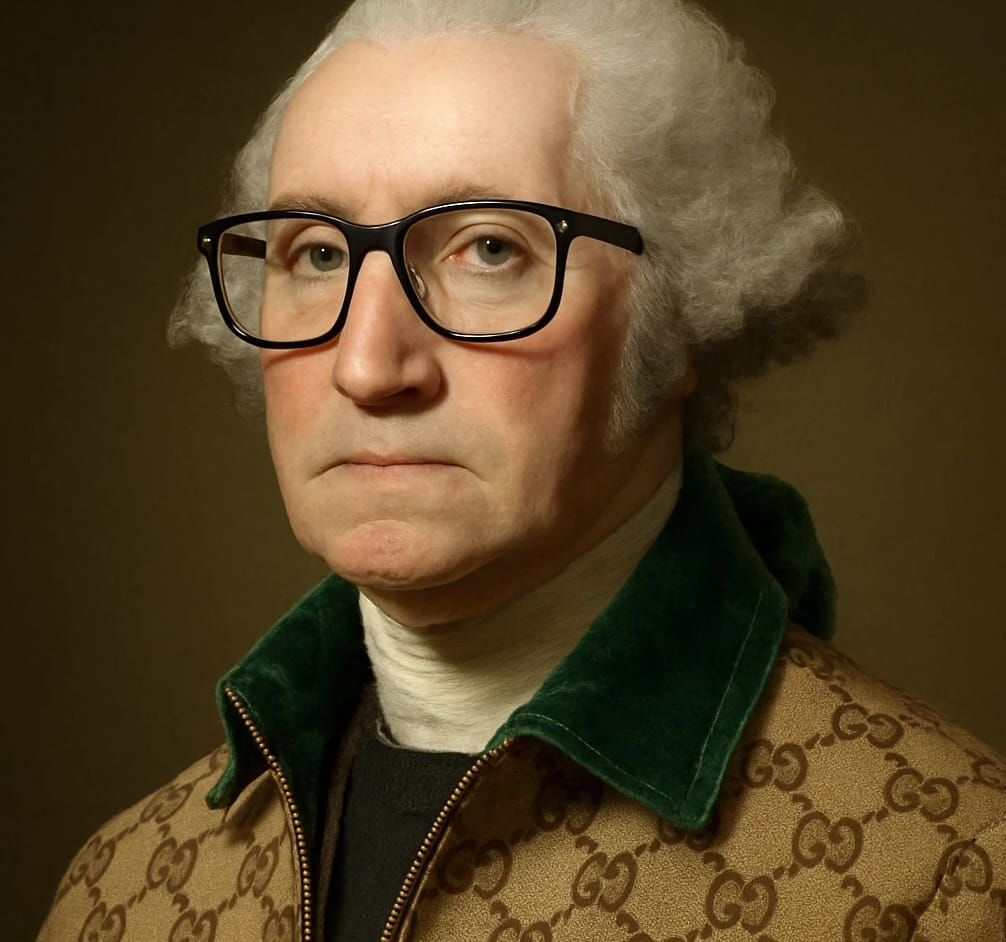

The London- and Berlin-based studio Random International has long worked with robotics, sensing, and responsive systems. Their best-known project, Rain Room (2012), uses motion tracking and a ceiling-mounted grid of electronically controlled valves to regulate falling water. Visitors walk through a downpour that parts around their bodies in real time. Rain Room is not AI, but its operation depends on real-time sensing and control systems. Cameras detect a visitor’s position; software calculates a response; hardware actuates a dense grid of individually controlled water streams. The result is an environment that reacts to human movement without relying on screens or projections.
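The sense, compute, actuate loop described above can be illustrated with a small sketch. This is not Random International's software; it only assumes a rectangular grid of valves, visitor positions already extracted from the tracking cameras, and a fixed dry radius around each person. The function name `valve_states` and all of its parameters are hypothetical.

```python
from typing import List, Tuple


def valve_states(
    rows: int,
    cols: int,
    cell_size_m: float,
    visitors: List[Tuple[float, float]],
    dry_radius_m: float,
) -> List[List[bool]]:
    """Return a rows x cols grid where True means the valve is open (water falls).

    Assumption: each valve covers a square floor cell of side cell_size_m,
    and a valve closes when any tracked visitor stands within dry_radius_m
    of the centre of that cell.
    """
    grid = []
    for r in range(rows):
        row = []
        for c in range(cols):
            # Centre of the floor area directly beneath this valve.
            x = (c + 0.5) * cell_size_m
            y = (r + 0.5) * cell_size_m
            # Compare squared distances to avoid a square root per valve.
            someone_near = any(
                (x - vx) ** 2 + (y - vy) ** 2 <= dry_radius_m ** 2
                for vx, vy in visitors
            )
            row.append(not someone_near)
        grid.append(row)
    return grid


# One visitor standing under the valve at row 1, column 1 of a 4 x 4 grid.
states = valve_states(4, 4, 1.0, [(1.5, 1.5)], 0.6)
for row in states:
    print("".join("~" if is_open else "." for is_open in row))
```

In a real installation this calculation would run continuously against fresh tracking data, and the time between detection and valve closure is exactly the latency a visitor feels as they walk.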

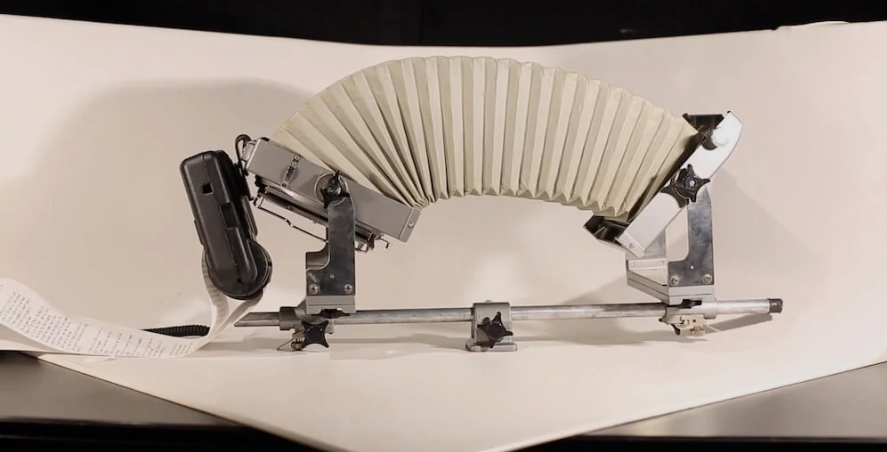

Subsequent projects extend this approach, as Random International has continued to develop responsive, system-driven installations. In Life in our Minds: Motherflock (2023), a coordinated group of robotic light elements moves collectively in response to real-time behavioral logic. Individual units operate as part of a distributed system, adjusting orientation and intensity according to programmed rules and environmental input. Computation is expressed through motors, control systems, and structural assemblies rather than screens.

Interaction remains spatial and bodily. Viewers enter the environment, and the system responds through coordinated physical motion. The technology is embedded in infrastructure rather than presented as a graphical interface.

Universal Everything: AI in Motion Systems

The UK-based studio Universal Everything is known for large-scale digital character works and immersive installations. In recent years, the studio has incorporated machine learning into projects where movement and form are generated or influenced algorithmically rather than pre-rendered as fixed sequences. In exhibition and public-facing installations, generative systems determine how digital figures evolve in response to real-time inputs. In some contexts, these outputs extend beyond the screen into architectural lighting systems or sculptural LED arrays, where software directly controls physical illumination patterns.

Instead of playing back completed animation files, systems calculate motion live. Sensors supply data; algorithms determine variation; lighting and display hardware execute change. The display remains, but it functions as part of a broader computational apparatus tied to physical infrastructure.

From Interface to Infrastructure

Across these practices, a pattern emerges. AI is not confined to a graphical user interface. It is integrated into cabinets, rooms, lighting systems, and mechanical assemblies. This approach is also visible in custom-built interactive devices presented at technology conferences and design fairs, where rule-based or generative systems are embedded directly into playable hardware. Buttons trigger algorithms. Joysticks modify system behavior. LED grids visualize live computation. The system is experienced through physical controls rather than abstract menus.

Physical interfaces impose constraints. Motors have torque limits. Water systems require calibrated pressure. Printers jam. These conditions shape how generative systems are engineered and deployed. Latency becomes perceptible. Malfunctions appear as mechanical disruptions rather than rendering artifacts.

Re-embedding AI into hardware alters perception. When a language model produces text in a browser, the process remains opaque. When a system prints text onto paper in real time, the sequence becomes legible: sensor input, computation, mechanical output. Cause and effect are easier to trace because the system occupies space.

Embedded computation is also present in certain architectural and fabrication contexts. Research studios have developed responsive partitions, adaptive lighting arrays, and sensor-driven prototypes that adjust position or illumination based on environmental input. In these cases, sensing and control systems are integrated directly into structural components rather than mediated solely through apps.

What distinguishes these projects is not novelty but placement. The model is not accessed exclusively through a browser window. It is situated within the object.

Generative systems without screens do not eliminate digital media. They redistribute it. Computation moves closer to actuators and sensors. Interfaces become spatial rather than exclusively graphical. The visible output is movement, light, water, or ink. For a field saturated with software demos and chat interfaces, this marks a notable shift. AI is not only something prompted through text. It is something that occupies space, consumes power, and moves matter.

Not on a screen. In the room.