Brain–computer interfaces (BCIs) have traditionally been developed within medical and neuroscience research. Systems that translate the brain's electrical activity into machine-readable signals have enabled patients to control prosthetic limbs, communication interfaces, and computer cursors. Over the past decade, artists and designers have also begun experimenting with these technologies, exploring how neural signals can function as expressive inputs rather than purely clinical data.

Advances in affordable electroencephalography (EEG) headsets and open-source signal processing frameworks have made neural sensing increasingly accessible outside laboratories. Although EEG devices cannot decode thoughts, they can detect measurable patterns associated with attention, relaxation, and cognitive effort. Within creative practice, these signals are often treated not as precise commands but as generative inputs capable of influencing visual systems, sound synthesis, or responsive environments.
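
To make "measurable patterns" concrete, the sketch below estimates how much of a signal's power falls in the alpha band (roughly 8 to 12 Hz), a range conventionally associated with relaxed wakefulness. It is a minimal illustration and not the method of any artwork or headset discussed here: the function names, the band edges, the 128 Hz sampling rate, and the synthetic sine-wave "recordings" are all assumptions chosen for the example.

```python
import math

def band_power(samples, fs, lo_hz, hi_hz):
    """Sum of squared DFT magnitudes for bins whose frequency lies in [lo_hz, hi_hz]."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the DC offset
    power = 0.0
    for k in range(1, n // 2):
        freq = k * fs / n
        if lo_hz <= freq <= hi_hz:
            re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(centered))
            im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(centered))
            power += re * re + im * im
    return power

def relative_alpha(samples, fs):
    """Share of 1-30 Hz power that falls in the (assumed) 8-12 Hz alpha band."""
    alpha = band_power(samples, fs, 8.0, 12.0)
    total = band_power(samples, fs, 1.0, 30.0)
    return alpha / total if total > 0 else 0.0

# Synthetic two-second windows (hypothetical data, not real EEG):
# one dominated by a 10 Hz component, one dominated by a 20 Hz component.
FS = 128
N = 256
alpha_heavy = [math.sin(2 * math.pi * 10 * i / FS) + 0.2 * math.sin(2 * math.pi * 20 * i / FS)
               for i in range(N)]
beta_heavy = [0.2 * math.sin(2 * math.pi * 10 * i / FS) + math.sin(2 * math.pi * 20 * i / FS)
              for i in range(N)]

print(relative_alpha(alpha_heavy, FS) > relative_alpha(beta_heavy, FS))
```

Real recordings are far noisier than these sine waves, which is one reason artists tend to treat such ratios as loose generative inputs and not as precise commands.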

Across contemporary media art, neural interfaces are emerging as instruments that translate cognitive signals into visual, sonic, and spatial media. The approach has precedents: biofeedback art of the 1960s and 1970s explored how physiological signals such as heart rate, brainwaves, and skin conductance could drive audiovisual systems. Today’s BCI-based artworks extend this lineage, combining neural sensing with machine learning, real-time computation, and networked media environments.

EEG Signals as Visual and Physical Media

One of the most direct artistic uses of EEG appears in the work of artist Lisa Park, whose installations translate brain activity into physical phenomena. Park frequently uses consumer EEG headsets to capture neural signals associated with shifts in attention and cognitive engagement.

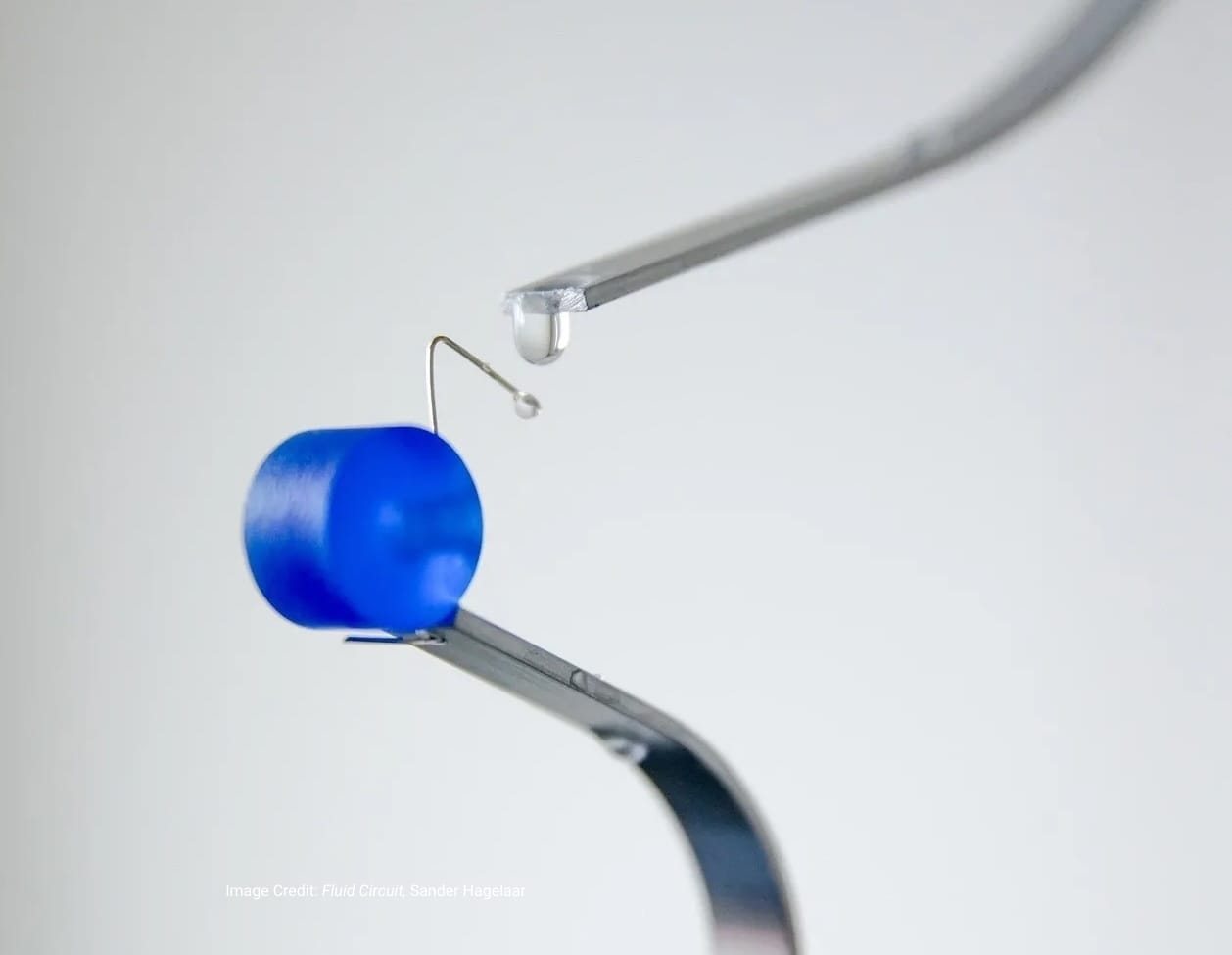

In the performance installation Eunoia (2013), Park wears an EEG device while seated among a circular array of water-filled vessels. Each vessel is connected to a vibration transducer that responds to changes in the EEG signal. As the performer attempts to regulate her mental focus, the vibrations create visible ripples across the water’s surface. The system does not attempt to interpret specific thoughts. Instead, it converts fluctuations in neural activity into subtle physical transformations. The resulting patterns make cognitive dynamics visible, translating otherwise imperceptible neural activity into an observable environmental effect.
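
A mapping of this kind, from a fluctuating reading to a vibration strength, can be sketched in a few lines. The example is illustrative only and does not describe Park's actual implementation: it assumes a hypothetical headset that reports an attention score between 0 and 100, and the names `smooth` and `to_amplitude` are invented for the sketch.

```python
def smooth(values, alpha=0.2):
    """Exponential moving average: damps jitter so the output changes gradually."""
    out = []
    state = values[0]
    for v in values:
        state = alpha * v + (1 - alpha) * state
        out.append(state)
    return out

def to_amplitude(score, lo=0.0, hi=100.0):
    """Clamp a score to [lo, hi] and rescale it to a 0.0-1.0 drive amplitude."""
    clamped = max(lo, min(hi, score))
    return (clamped - lo) / (hi - lo)

# Hypothetical attention readings, one per second.
readings = [20, 35, 80, 75, 40, 90]
amplitudes = [to_amplitude(s) for s in smooth(readings)]
print([round(a, 3) for a in amplitudes])
```

The smoothing step is a design choice: without it, sample-to-sample noise in the reading would dominate the output, while a smaller `alpha` makes the response slower and calmer.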

Park’s work demonstrates how neural interfaces can function less as instruments of control than as mechanisms for externalizing cognition. By turning EEG signals into physical motion, the installation foregrounds the fluctuating nature of neural activity while revealing how internal mental states can shape responsive systems.

Park extended this approach in the installation Blooming (2015), which translates EEG signals into kinetic movement within a spatial environment. In the work, an EEG headset captures fluctuations in neural activity associated with attention and mental engagement. These signals are transmitted to mechanical devices that animate artificial flowers placed throughout the installation.

As the participant’s brain activity shifts, the flowers open and close in response to variations in the EEG data. The installation creates a feedback loop between internal cognitive states and the surrounding environment, so that small changes in attention or mental effort become visible through the movement of the flowers. Like Eunoia, Blooming does not attempt to decode thoughts. Instead, it maps measurable neural patterns onto physical movement. The work highlights the instability and interpretive nature of neural signals while demonstrating how cognitive data can be transformed into expressive media.
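
One common way to turn a wavering signal into a binary open/closed motion is a threshold with hysteresis, sketched below. How the installation itself drives its flowers is not documented here, so this is an assumption-laden illustration: the normalized 0.0 to 1.0 score, the two threshold values, and the function name `flower_states` are all hypothetical.

```python
def flower_states(scores, open_above=0.6, close_below=0.4):
    """Return True (open) or False (closed) for each score, using two thresholds.

    A closed flower opens only when the score rises above `open_above`;
    an open flower closes only when it falls below `close_below`.
    The gap between the thresholds prevents rapid flutter near a single cutoff.
    """
    is_open = False
    states = []
    for s in scores:
        if not is_open and s > open_above:
            is_open = True
        elif is_open and s < close_below:
            is_open = False
        states.append(is_open)
    return states

# Hypothetical normalized engagement scores.
print(flower_states([0.5, 0.7, 0.5, 0.45, 0.3, 0.5]))
# → [False, True, True, True, False, False]
```

Scores that hover between the two thresholds leave the flower in whatever state it was already in, which is what makes the motion read as deliberate instead of jittery.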

Neural Signals in Computational Media

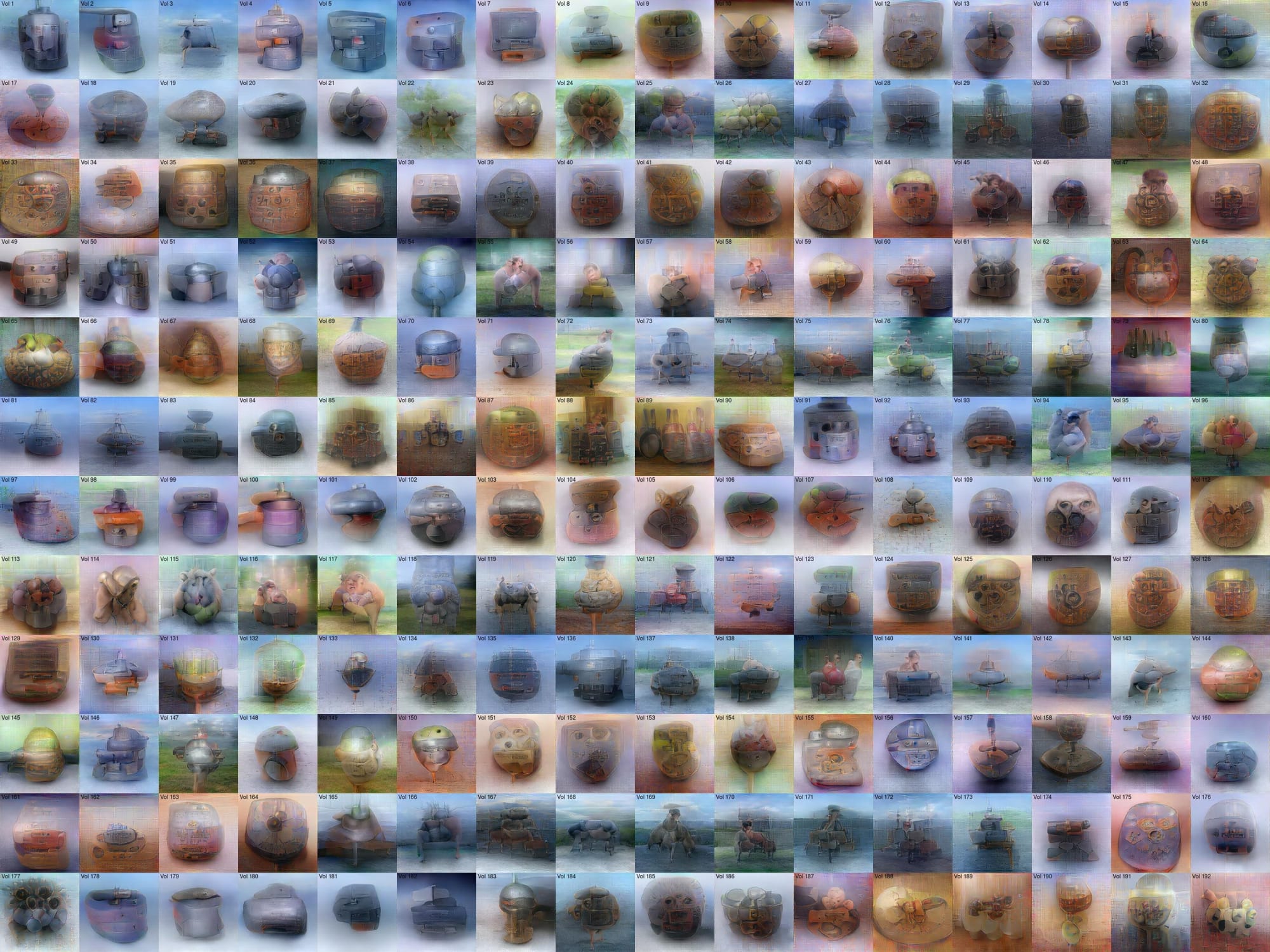

Artist and technologist Daito Manabe, founder of Rhizomatiks, investigates how neuroscience research and machine learning intersect with computational media. His work often examines how physiological and perceptual data can be translated into visual systems. Dissonant Imaginary (2019), developed by Rhizomatiks Research in collaboration with neuroscientist Yukiyasu Kamitani, draws on Kamitani’s research into neural decoding, where machine-learning models analyze patterns of brain activity associated with visual perception.

Rather than functioning as a conventional brain–computer interface, the work explores how neural data can be computationally interpreted and represented. Visual systems developed for the project reference research that reconstructs or predicts images from brain activity, highlighting how machine-learning models translate neural signals into visual information. By connecting neuroscience research with generative media systems, the project reflects the growing intersection between brain research and experimental media practice.

Human–Machine Perception and Cognitive Systems

Artist Justine Emard investigates how artificial intelligence and sensing technologies interpret human presence. Her installations frequently stage encounters between human bodies and intelligent systems capable of analyzing movement, gesture, and behavioral patterns.

In Co(AI)xistence (2017), developed with researchers associated with the Okinawa Institute of Science and Technology, Emard presents an interaction between a human dancer and a robotic arm equipped with AI-based vision systems. The system observes the dancer’s gestures through cameras and computational sensing technologies, generating responsive movements that unfold throughout the performance.

Rather than translating neural signals directly into media output, the project explores how machines construct representations of human behavior through sensor data and machine learning models. The installation highlights the interpretive frameworks through which computational systems perceive and model human activity. By situating the body within networks of sensors and intelligent systems, Emard’s work reflects the broader technological landscape in which neural interfaces are emerging. Brain signals represent only one layer within a growing ecosystem of biometric data streams captured and interpreted by computational systems.

Across these practices, neural interfaces appear less as tools for reading minds than as instruments that translate cognition into data streams capable of shaping media systems. The result is a new class of responsive technologies in which attention, perception, and mental effort become parameters within computational environments.